
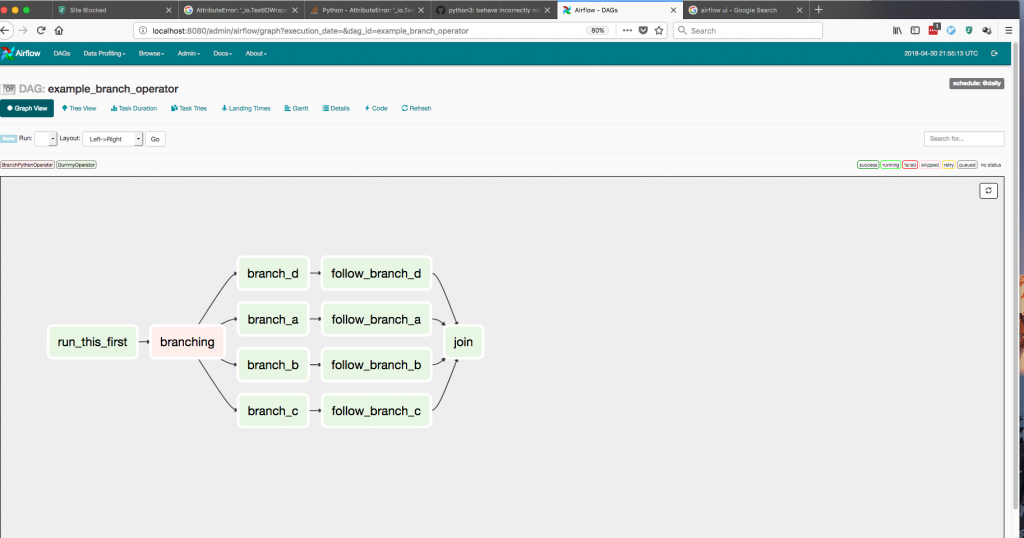

9/15/2023

Airflow docker kubernetes

Airflow and Kubernetes are a perfect match, but each is a complicated beast in its own right. There have been many attempts to provide partial or complete deployment solutions with custom helm charts, but usually people just look around for useful snippets and ideas to build their own solution instead of installing those charts directly. Over the last year, community members made an enormous effort to provide robust, simple and versatile support for those deployments that would respond to the needs of all kinds of Airflow users: starting from the official container image, through the quick-start docker-compose configuration, and culminating in April with the release of the official Helm Chart for Airflow. This talk is aimed at Airflow users who would like to make use of all that effort.

When a DAG submits a task, the KubernetesExecutor requests a worker pod from the Kubernetes API. The KubernetesExecutor requires a non-SQLite database in the backend. The scheduler itself does not necessarily need to be running on Kubernetes, but it does need access to a Kubernetes cluster.

Azure App Service for Linux is integrated with the public DockerHub registry and allows you to run the Airflow web app on Linux containers with continuous deployment. Puckel's Airflow Docker image contains the latest build of Apache Airflow, with automated build and release to the public DockerHub registry. Individual tasks execute as part of the LocalExecutor inside k8s.

In the helm chart, Airflow connections can be set via URI only. The easiest way to get the URI is to create the connection in the webserver UI in the normal way, then SSH into the running webserver container and execute the command airflow connections get followed by the connection ID.
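To make the connection URI mentioned above more concrete, here is a minimal sketch of how such a URI can be assembled by hand, assuming the general shape type://login:password@host:port/schema?extras. The helper name build_connection_uri and all values in the example (host, credentials, database name) are hypothetical and only serve as illustration; the URI printed by the webserver container remains the authoritative one.

```python
from urllib.parse import quote, urlencode


def build_connection_uri(conn_type, host, login="", password="",
                         port=None, schema="", extra=None):
    """Assemble a URI of the form type://login:password@host:port/schema?extras.

    Hypothetical helper for illustration; it is not part of Airflow.
    """
    auth = ""
    if login:
        # Percent-encode credentials so '@', '/' or ':' cannot break the URI.
        auth = quote(login, safe="")
        if password:
            auth += ":" + quote(password, safe="")
        auth += "@"
    netloc = auth + host + (f":{port}" if port is not None else "")
    uri = f"{conn_type}://{netloc}/{quote(schema, safe='')}"
    if extra:
        # Extra parameters become the query string.
        uri += "?" + urlencode(extra)
    return uri


# Placeholder values, not taken from any real deployment.
uri = build_connection_uri(
    "postgres",
    "db.example.internal",
    login="airflow",
    password="p@ss/word",
    port=5432,
    schema="analytics",
    extra={"sslmode": "require"},
)
print(uri)
```

Percent-encoding the password matters here: an unescaped @ or / in the credentials would otherwise be read as part of the host or path.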
Full support for Kubernetes deployments was under development by the community for quite a while, and in the past Airflow users had to rely on 3rd-party images and helm charts to run Airflow on Kubernetes. In this talk Jarek and Kaxil will talk about the official community support for running Airflow in the Kubernetes environment.

The KubernetesExecutor runs as a process in the Airflow scheduler. To address this issue, we've utilized Kubernetes to allow users to launch arbitrary Kubernetes pods and configurations.

The deployment steps are: build and push your Airflow Docker image, create a Kubernetes ConfigMap to store all the environment variables, deploy the Airflow scheduler and webserver, and connect to Airflow's webserver UI. Note: the DAGs are part of the Docker images.

Integrate our DAG with GCP services such as Google Cloud Storage. Install Airflow dependencies and custom operators for our DAG via a Docker image pulled from the Artifact Registry.

I used values.yaml from the official helm chart with just a minor change in the images section: # Images

The Helm deployment does not finish, and when I run kubectl describe pod I see the following errors:

Events:

Normal Scheduled 50m default-scheduler Successfully assigned default/apache-airflow-run-airflow-migrations-bbfd8 to airflow-cluster-control-plane

Warning Failed 49m (x4 over 50m) kubelet Failed to pull image "127.0.0.1:5001/my-dags:0.0.1": rpc error: code = Unknown desc = failed to pull and unpack image "127.0.0.1:5001/my-dags:0.0.1": failed to resolve reference "127.0.0.1:5001/my-dags:0.0.1": failed to do request: Head "": dial tcp 127.0.0.1:5001: **connect: connection refused**

Warning Failed 49m (x4 over 50m) kubelet Error: ErrImagePull

Warning Failed 49m (x6 over 50m) kubelet Error: ImagePullBackOff

The problem I have, however, is that there seems to be no network connectivity between the kind container and my local host. Of course, if I curl from my local machine it works without issues.
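One plausible reading of the connection refused error above is that the image reference points at a loopback address. Inside the kind node container, 127.0.0.1 refers to that container itself rather than to the host machine, so a registry listening on the host would not be reachable under that name. The sketch below flags such references before deploying. The function names registry_host and is_loopback_registry are hypothetical, and the check only covers IPv4 literals and localhost.

```python
import ipaddress


def registry_host(image_ref):
    """Return the registry host of an image reference, or None if it has none."""
    first, sep, _ = image_ref.partition("/")
    # The first path component counts as a registry only if it looks like a
    # host: it contains '.' or ':' or is exactly 'localhost'.
    if sep and ("." in first or ":" in first or first == "localhost"):
        return first.rsplit(":", 1)[0] if ":" in first else first
    return None


def is_loopback_registry(image_ref):
    """True if the registry part of image_ref is localhost or a loopback IP."""
    host = registry_host(image_ref)
    if host is None:
        return False
    if host == "localhost":
        return True
    try:
        return ipaddress.ip_address(host).is_loopback
    except ValueError:
        # A DNS name (or bracketed IPv6 literal); not resolved in this sketch.
        return False


for ref in ("127.0.0.1:5001/my-dags:0.0.1", "apache/airflow:2.3.0"):
    print(ref, "->", is_loopback_registry(ref))
```

A reference flagged this way would need a registry address that is reachable from inside the cluster nodes, which depends on how the registry and the kind cluster are networked.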
I'm new to Airflow and I'm trying to set it up locally on Kubernetes using the official helm chart with kind. It's pretty straightforward up to the point where I want to configure Airflow to load DAGs from an image in my local Docker registry.

I created a local Docker registry running on port 5001 (the default 5000 is occupied by macOS): reg_name='registry'

I created my image with the following Dockerfile: FROM apache/airflow:2.3.0

Automatically pull our DAG from a private GitHub repository with the git-sync feature.
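The build-and-push step for the local registry can be sketched as follows. This is only an illustration under assumptions: the image name my-dags and tag 0.0.1 are taken from the error log in this post, the registry address 127.0.0.1:5001 from the port mentioned above, and the helper image_commands is hypothetical. The script prints the docker commands instead of running them.

```python
import shlex


def image_commands(registry, name, tag, context="."):
    """Return the full image reference and the docker commands to build and push it."""
    ref = f"{registry}/{name}:{tag}"
    commands = [
        # Build the image from the Dockerfile in the given build context.
        ["docker", "build", "-t", ref, context],
        # Push the tagged image to the registry named in the reference.
        ["docker", "push", ref],
    ]
    return ref, commands


ref, commands = image_commands("127.0.0.1:5001", "my-dags", "0.0.1")
for cmd in commands:
    # Printed for review; subprocess.run(cmd, check=True) would execute them.
    print(shlex.join(cmd))
```

Keeping the reference in one place makes it easier to use the same repository and tag in the images section of values.yaml.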
